Computer Vision

This page showcases the computer vision projects I have completed over the past couple of years. Use the arrows to browse the photos for each project.

Skin Detection

The first computer vision project I completed is a skin detection application. The goal is to turn the pixels belonging to the skin of an individual or group of individuals in a photo white and turn everything else in the photo black. The results are not perfect, as clothing and lighting can degrade the output. The photo gallery below shows the original and processed photos. I have posted only a couple of photos to protect the privacy of the participants in this experiment. The code is stored in the repository linked below: Skin Detection Google Colab Document

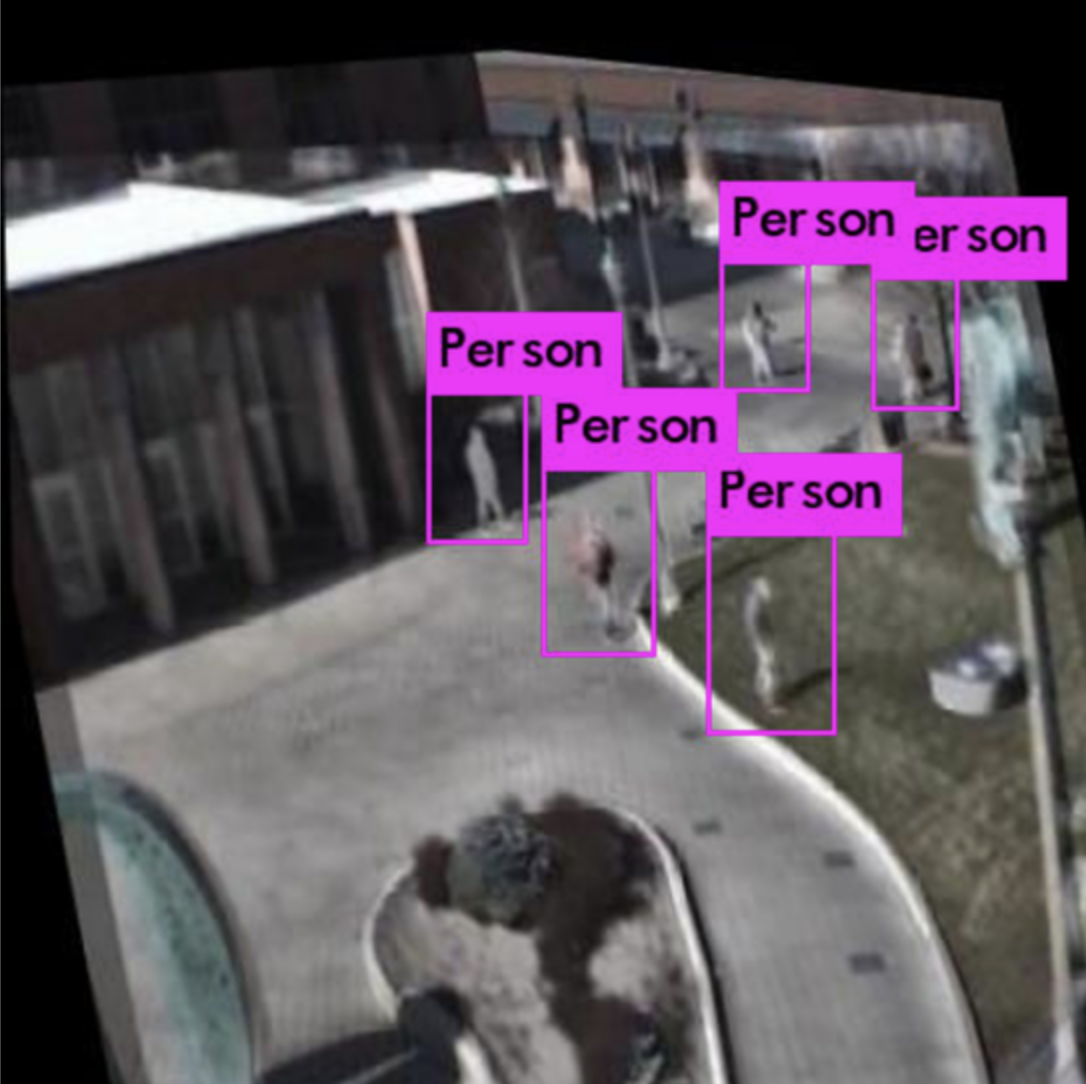
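To make the white-on-black idea concrete, here is a minimal sketch of one way such a mask can be produced. It is not the code from the repository: it assumes a fixed RGB threshold rule of the kind often used for skin under even daylight, and the thresholds, the function names `is_skin` and `skin_mask`, and the nested-list image format are all illustrative assumptions. It is written in plain Python for readability; a real version would more likely vectorize the same test with NumPy or OpenCV.

```python
def is_skin(r, g, b):
    """Return True if an RGB pixel passes a simple fixed-threshold skin rule.

    The thresholds are an assumption for illustration and are sensitive to
    lighting, which is one reason results on real photos are imperfect.
    """
    return (
        r > 95 and g > 40 and b > 20
        and max(r, g, b) - min(r, g, b) > 15  # enough colour spread (not grey)
        and abs(r - g) > 15                   # red clearly separated from green
        and r > g and r > b                   # red is the dominant channel
    )


def skin_mask(image):
    """Map an image to a binary mask: 255 (white) for skin, 0 (black) otherwise.

    `image` is a list of rows, each row a list of (R, G, B) tuples in 0-255.
    """
    return [[255 if is_skin(*pixel) else 0 for pixel in row] for row in image]


# Tiny 2x2 example: two skin-like tones, one dark grey pixel, one pure blue pixel.
example = [
    [(220, 170, 140), (30, 30, 30)],
    [(0, 0, 255), (200, 120, 100)],
]
mask = skin_mask(example)
```

A rule like this also shows why clothing causes false positives: any fabric whose colour falls inside the thresholds is marked as skin.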

Search and Rescue

The second project is a person detection algorithm for search and rescue. I worked on it with four other people, and we used the YOLOv4 algorithm to detect individuals in a mountainous region. The model was trained on three different datasets, and the accuracy (mAP score) for each dataset is listed below. The paper for this project can be accessed through the link below. An Investigation Into Using Neural Networks to Detect People for Search and RescueOperations.pdf

- Thermal 98%

- Composite Dataset 98%

- RGB Dataset 98%
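For readers unfamiliar with mAP, the sketch below shows how average precision (AP) can be computed for a single class; mAP is the mean of AP over all classes, so with one class such as "person" the two coincide. This is a simplified illustration and not the evaluation code used in the paper: the function names, the `(x1, y1, x2, y2)` box format, the single-image input, and the 0.5 IoU threshold are all assumptions.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(detections, ground_truth, iou_threshold=0.5):
    """AP for one class. `detections` is a list of (score, box) pairs and
    `ground_truth` is a list of boxes (simplified here to a single image)."""
    if not ground_truth:
        return 0.0

    # Greedily match detections, highest score first, to unmatched ground truth.
    matched, hits = set(), []
    for _, box in sorted(detections, key=lambda d: d[0], reverse=True):
        best_iou, best_idx = 0.0, None
        for idx, gt_box in enumerate(ground_truth):
            overlap = iou(box, gt_box)
            if idx not in matched and overlap > best_iou:
                best_iou, best_idx = overlap, idx
        is_hit = best_idx is not None and best_iou >= iou_threshold
        if is_hit:
            matched.add(best_idx)
        hits.append(is_hit)

    # Precision and recall after each detection in ranked order.
    precisions, recalls, true_positives = [], [], 0
    for rank, is_hit in enumerate(hits, start=1):
        true_positives += is_hit
        precisions.append(true_positives / rank)
        recalls.append(true_positives / len(ground_truth))

    # Make precision non-increasing, then sum the area under the P-R curve.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    ap, previous_recall = 0.0, 0.0
    for precision, recall in zip(precisions, recalls):
        ap += (recall - previous_recall) * precision
        previous_recall = recall
    return ap


# Two people in the scene; three detections, one of them a false positive.
people = [(0, 0, 10, 10), (20, 20, 30, 30)]
found = [(0.9, (0, 0, 10, 10)), (0.8, (50, 50, 60, 60)), (0.7, (20, 20, 30, 30))]
ap = average_precision(found, people)
```

In this toy example both people are found, but the false positive ranked second lowers the score below 1.0, which is the kind of error a high mAP rules out.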

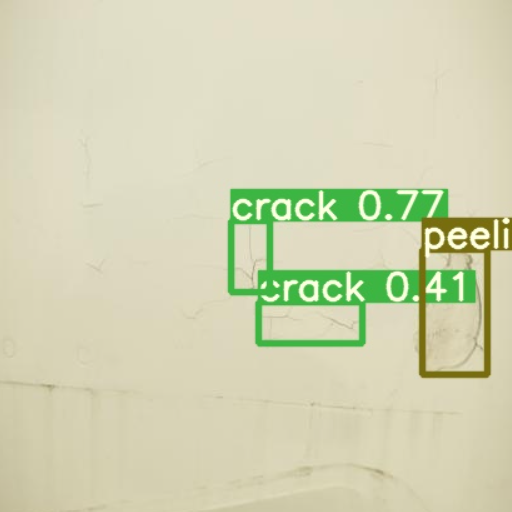

Airplane Defect

The third project is an airplane defect detection and tracking algorithm. The defect detection work focused on identifying five different defects (listed below), while the tracking work focused on following seaplanes as they moved through the Vancouver Harbour Airport.

- Corrosion

- Missing Screws

- Peeling

- Crack

- Dent

Hand Gesture Recognition

In the final project, I built a hand gesture recognition system on an embedded device. The photos below show the system detecting distinct hand gestures on a Raspberry Pi 4 at 60 frames per second (FPS). I gathered and annotated a custom dataset of 1,200 images and trained the model on it in Google Colab, where it reached a 97% mAP score. The trained weights and configuration files were then converted to a blob file so that inference for the CNN (the hand gesture model) could run on a Luxonis TPU. Running inference on the TPU is what made the 60 FPS rate achievable. Lastly, the output for each frame was piped to the Raspberry Pi, where it was matched with a given computer action. This matching allows a person to control the Raspberry Pi with their hands. Hand Gesture Recognition Work
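The last step, matching each frame's output to a computer action, can be sketched as follows. This is an illustration under stated assumptions and not the project's actual code: the gesture labels, the action names, the `GestureDispatcher` class, and the confidence and stability thresholds are all hypothetical. At 60 FPS a held gesture is reported many times per second, so the sketch fires an action only once the same label has been seen for a few consecutive frames, and does not fire it again until the gesture changes or disappears.

```python
from collections import deque

# Hypothetical mapping from detected gesture label to a computer action.
GESTURE_ACTIONS = {"fist": "pause", "palm": "play", "thumbs_up": "volume_up"}


class GestureDispatcher:
    """Turn a per-frame stream of (label, confidence) into one-shot actions."""

    def __init__(self, actions, min_confidence=0.6, stable_frames=3):
        self.actions = actions
        self.min_confidence = min_confidence
        self.recent = deque(maxlen=stable_frames)  # labels from the latest frames
        self.last_fired = None                     # label that last triggered an action

    def update(self, label, confidence):
        """Feed one frame's top detection; return an action name or None."""
        if label is None or confidence < self.min_confidence:
            # No reliable detection: reset so the next gesture can fire again.
            self.recent.clear()
            self.last_fired = None
            return None

        self.recent.append(label)
        window_full = len(self.recent) == self.recent.maxlen
        stable = window_full and len(set(self.recent)) == 1
        if stable and label != self.last_fired and label in self.actions:
            self.last_fired = label
            return self.actions[label]
        return None


# Simulated frames: a held open palm, a frame with no hand, then a held fist.
dispatcher = GestureDispatcher(GESTURE_ACTIONS)
frames = [("palm", 0.9)] * 5 + [(None, 0.0)] + [("fist", 0.8)] * 3
fired = [dispatcher.update(label, conf) for label, conf in frames]
```

In a real system the returned action name would be passed to whatever performs the action on the Raspberry Pi, such as a keyboard or media-control call.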